3 Design Patterns for Token Economics

Stop letting bloated prompts bankrupt your cloud bill. How to architect a lean AI swarm and protect your margins.

In 2008, Robert C. Martin published Clean Code, establishing that poorly written software wasn’t just an aesthetic problem - it was a systemic drain on a company’s time, resources, and survival.

Today, we are facing the exact same crisis in the Agentic Stack. But this time, the cost of “dirty code” is immediate and financial.

In traditional software, if a junior developer writes a wildly inefficient loop, the CPU just runs a little hotter. You pay a fractional premium on your AWS compute bill.

AI is fundamentally different. Tokens = Compute = Dollars.

AI is the first software paradigm where the verbosity of your architecture scales your infrastructure costs linearly. If you build an AI feature that passes the user’s entire 50-page history, a massive system prompt, and 10 large RAG chunks into every single API call, you are committing financial suicide. When you scale from 500 users to 50,000 users, that inefficient “Mega-Prompt” will bankrupt your product.

CTOs are looking at $40,000 monthly OpenAI bills for features that barely move the needle.

To survive the AI transition, we have to stop treating LLMs like an infinite buffet. We must architect for Token Dieting.

Just as we codified the structural patterns for AI swarms, here are the three definitive design patterns for Token Economics.

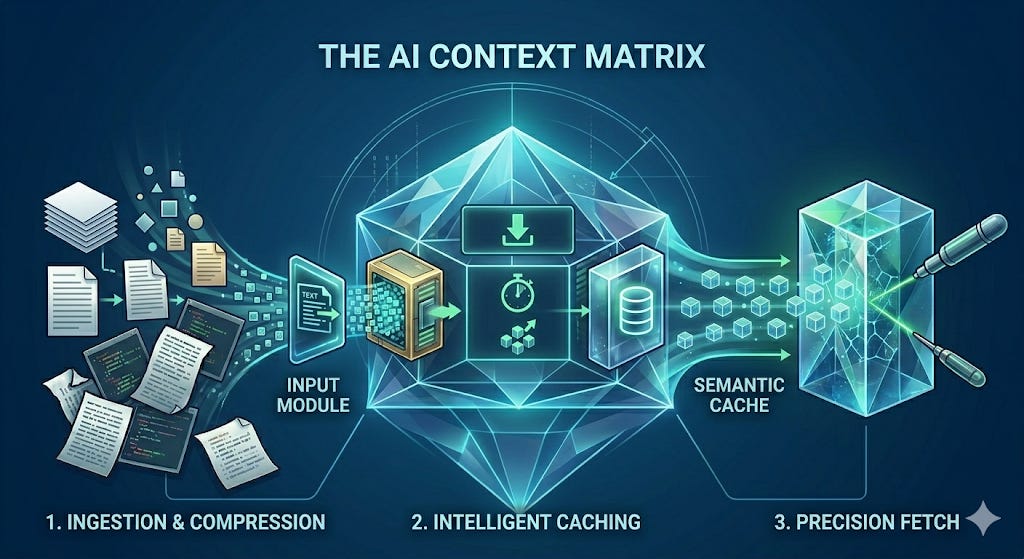

1. The Semantic Cache Pattern (The DRY Principle for AI)

The Concept: Don’t pay a frontier model to answer the exact same question twice.

The Inspiration: The DRY Principle (Don’t Repeat Yourself).

The Problem: If you have an internal HR bot, 300 different employees are going to ask, “What is the policy for rolling over PTO?” this month. If you pass that query to GPT-4o or Claude 3.5 Sonnet every single time, you are paying a premium reasoning model to generate the exact same text 300 times. This is throwing money into a furnace.

The Solution:

You place a Semantic Cache directly in front of your LLM router. When a user asks a question, you do not immediately call the LLM.

You pass the query through a hyper-cheap embedding model (costing fractions of a cent).

You check a fast vector database (like Redis or Qdrant) for a semantic match.

If an employee asks, “How do I carry over my vacation days?” the cache recognizes it has a 96% semantic similarity to the PTO question answered 10 minutes ago.

It intercepts the call and instantly returns the cached answer.

The ROI: Zero calls to the expensive reasoning model. Time-To-First-Token (TTFT) drops from 2 seconds to 50 milliseconds. Your AI feels instantly responsive, and your token cost for that interaction is functionally zero.

2. The Context Compressor Pattern (MapReduce for Prompts)

The Concept: Never force your CEO (the expensive model) to read the raw data.

The Inspiration: The MapReduce algorithm / The Executive Briefing.

The Problem: You are building an agent that analyzes GitHub pull requests and Jira tickets to generate a weekly engineering report. Your pipeline blindly dumps 60 pages of raw JSON, commit logs, and comment threads directly into your most expensive model. You are paying frontier-model prices to process structural boilerplate.

The Solution:

You implement a multi-model routing swarm. You deploy a hyper-fast, incredibly cheap “Gatekeeper” model (like Llama-3-8B or Claude 3 Haiku).

The Gatekeeper ingests the massive 60-page raw data dump.

Its only job is to aggressively summarize, extract the key entities, and format them into a dense, 500-token markdown brief.

You then pass only that 500-token summary to your expensive “Executive” model (like GPT-4 or Claude Opus) to perform the deep reasoning and write the final report.

The ROI: You reduce your input tokens by 90%. You use the $0.25/1M token model for the heavy lifting (reading), and the $15.00/1M token model strictly for the high-IQ logic.

3. The Precision Fetch Pattern (Lazy-Loading for Agents)

The Concept: Fetch only the exact node of information required, when it is required.

The Inspiration: Lazy-Loading in front-end development.

The Problem:

Standard Retrieval-Augmented Generation (RAG) is a shotgun approach. A user asks a question, and the vector database blindly fetches the top 10 closest text chunks (often 5,000+ tokens) and force-feeds them into the prompt, hoping the answer is in there somewhere. Half of that context is irrelevant, confusing the LLM and inflating your bill.

The Solution:

This ties directly back to our L1/L2/L3 Memory Architecture. We strip the L1 Working Memory down to almost nothing. Instead of force-feeding the agent, we give it a strict Tool Call to query the L3 Core Memory.

Because the agent is intelligent, it doesn’t just do a blind semantic search. It writes a precise metadata filter or a Graph Cypher query.

Instead of pulling the entire “Q3 Financials” document, the agent executes: fetch_revenue(quarter="Q3", region="EMEA"). The system returns exactly one sentence: “EMEA Q3 Revenue was $4.2M.” (10 tokens).

The ROI: You transform a 5,000-token shotgun blast into a 10-token sniper shot. Not only do your unit economics drastically improve, but you also eliminate the “Lost in the Middle” hallucination phenomenon because the agent’s context window is flawlessly clean.

The Executive Mandate: Architect, Don’t Prompt

We have to stop treating LLM API calls like magic text boxes where we just cross our fingers and hope for the best.

If your engineering team’s solution to every hallucination or reasoning failure is “just add more instructions to the system prompt,” they are actively building technical and financial debt.

To build an enterprise-grade AI product that actually makes financial sense, you must treat your context window as your most precious, expensive resource.

Implement Semantic Caching to stop paying for the same answer twice. Use cheap models as Context Compressors. And enforce Precision Fetching to keep your L1 memory ruthlessly clean.

Clean code saved the software industry. Clean context will save the AI industry.

🚀 [Apply for the Flurit.ai Early Adopter Design Program to test the Shadow Swarm in your environment]