How Agents Talk to Agents: The A2A Protocol

The Omni-Agent is dead. Welcome to the era of Contract-Based Swarms, infinite loop prevention, and the Agent-to-Agent (A2A) Architecture.

Over the last few weeks on The Agentic Stack, we have systematically secured the internal state of our agentic architectures. We built Zero-Trust API sidecars to protect our databases, we implemented the Human API to govern high-stakes execution, and we deployed Redis Mutex locks to prevent asynchronous background workers from corrupting our enterprise data during concurrent operations.

Our internal house is finally in order. We have tamed the LLM.

But the ecosystem outside our walls is shifting at an unprecedented velocity. Over the last thirty days, we have witnessed a massive tectonic shift in how the hyper-scalers are positioning enterprise AI. With Microsoft announcing general availability for Agent-to-Agent (A2A) capabilities in Copilot Studio, IBM rolling out their multi-agent orchestration platforms at Think 2026, and Salesforce aggressively pushing cross-platform “Agentforce” workers, the industry has universally agreed on one undeniable architectural reality:

The Omni-Agent is a myth.

You are not going to build one massive, omnipotent LangGraph agent that holds a 10-million token context window and inherently knows how to query Salesforce, manage NetSuite, provision AWS infrastructure, and resolve HR disputes. The “God Model” approach is a catastrophic anti-pattern that leads to unmanageable latency, astronomical token costs, and security nightmares.

Instead, the future of enterprise AI is Micro-Agents.

Just as monolithic applications fractured into microservices a decade ago, monolithic AI is fracturing into specialized, domain-isolated swarms. Your custom LangGraph IT agent is going to need to negotiate with a proprietary Salesforce HR agent to resolve an employee onboarding ticket.

But how do they actually communicate?

If your answer is “They will just prompt each other using natural language,” your entire infrastructure is a ticking time bomb. Today, we are tearing down unstructured agent communication and architecting the Agent-to-Agent (A2A) Protocol. We are going to apply hardcore distributed systems principles—Machine-to-Machine JWT authentication, the Saga Pattern for distributed rollbacks, and Network TTLs—directly to our AI swarms.

Let’s dissect how to make agents talk safely.

Chapter 1: The Unstructured Chat Nightmare

When developers first attempt to connect two agents, they almost always default to the most dangerous API pattern imaginable: Natural Language passing. Because LLMs are designed to process human text, junior engineers assume that the easiest way to bridge two systems is to just pipe the output of Agent A directly into the prompt of Agent B.

Imagine your internal IT Agent (Agent A) needs the Salesforce HR Agent (Agent B) to verify a user’s department before it provisions a GitHub Enterprise license.

The Flawed Architecture:

Agent A formulates a thought process, generates a string of text, and sends it as an HTTP POST to Agent B:

“Hello HR Agent, I am the IT Agent. Please look up Ashu Kumar and tell me if he is in the engineering department so I can provision his GitHub access. Thank you.”

Agent B processes this string. But because it is an LLM running on a probabilistic transformer model, its response is non-deterministic. It doesn’t return a boolean true. It returns conversational prose:

“Sure thing! I checked the directory and Ashu Kumar is indeed an engineer. I can help you with other employee records too, what else do you need today?”

Agent A receives this response. It parses the text, realizes the objective is complete, but gets confused by the open-ended question at the end. It replies out of conversational obligation:

“I don’t need anything else right now, just confirming the GitHub access. Thank you for your help.”

Agent B: “You are very welcome! Let me know if you need anything else in the future.”

Agent A: “Will do.”

Agent B: “Great.”

Congratulations. You have just triggered an Agentic Infinite Loop.

Two asynchronous, highly-capable LLMs are now trapped in an endless cycle of polite conversation. Because neither agent possesses a strict terminal state for “small talk,” they will continue to ping-pong pleasantries back and forth until they hit a rate limit. You are now burning $50 an hour in API compute costs, your IT provisioning queue is completely blocked, and your infrastructure is hanging.

Agents cannot communicate using unstructured “vibes.” They must communicate using strict, deterministic contracts.

Chapter 2: The Contract-Based Swarm (A2A Architecture)

To fix this, we have to treat Agent B (the external agent) exactly like a traditional REST API, but with an intelligent routing layer. We must bridge the probabilistic world of the LLM with the deterministic world of enterprise software.

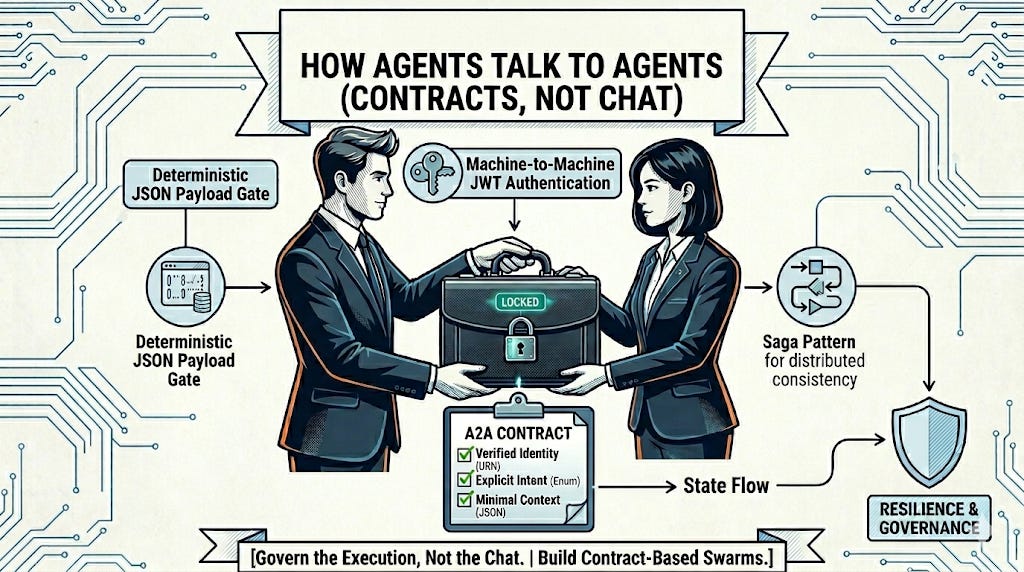

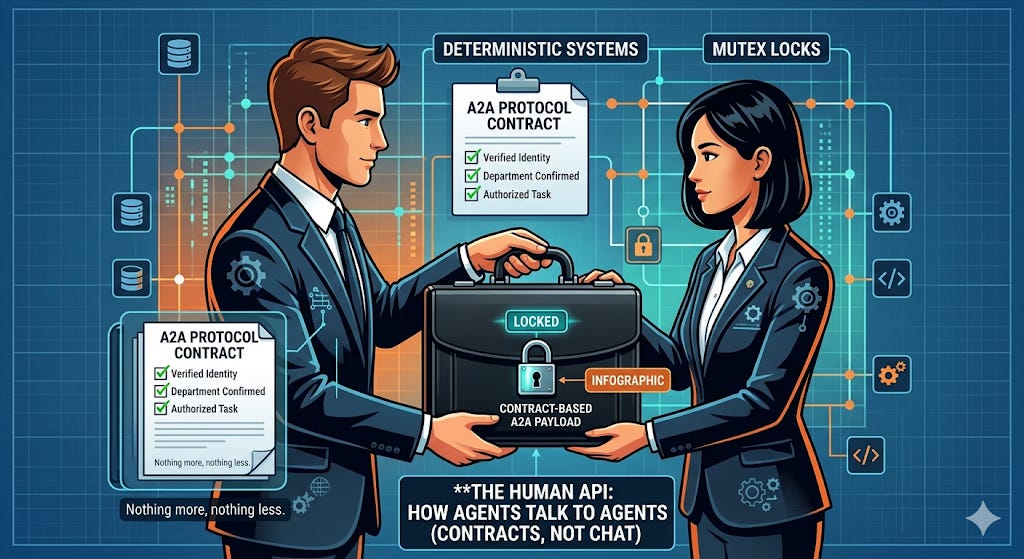

In the A2A Protocol, agents do not send each other chat messages. They send Intent Payloads.

When Agent A needs information from Agent B, it uses a specific tool call (often defined via the Model Context Protocol or strict JSON Schema) to generate a machine-readable request. This schema acts as the “Contract.”

The A2A payload must contain three distinct elements:

The Origin Identity: Cryptographic proof of who is asking (e.g.,

urn:agent:internal_it_provisioner).The Intent: A structured enumeration of what is being requested (e.g.,

VERIFY_EMPLOYEE_DEPARTMENT).The Context Boundary: The exact parameters needed, with zero conversational fluff (e.g.,

{"email": "ashu@company.com"}).

Stop thinking of inter-agent communication as a Slack channel. Start visualizing it as two agents passing a locked briefcase. Inside the briefcase is a strict, single-page JSON form with exactly three key-value pairs filled out. Nothing more, nothing less.

Chapter 3: The Authentication Layer (Machine-to-Machine JWTs)

Before we even look at the payload, we must address the most glaring security flaw in multi-agent swarms: Trust.

If an incoming API request says “I am the IT Agent, please give me the payroll data,” your HR Agent cannot simply take that string at face value. Prompt injection and internal actor spoofing are rampant. In a true enterprise environment, AI agents require Service Accounts and cryptographic identity verification.

We must implement Machine-to-Machine (M2M) authentication using JSON Web Tokens (JWTs) scoped specifically for agentic execution.

When your LangGraph IT Agent wakes up to execute a multi-step DAG, your orchestrator must mint a short-lived JWT for that specific run. This token contains claims about what the agent is allowed to do.

JSON

// Example of an Agentic JWT Payload

{

"iss": "https://auth.yourcompany.com",

"sub": "agent_id_9981_it_provisioning",

"aud": "agent_swarm_internal",

"roles": ["read:employee_directory", "write:github_licenses"],

"exp": 1715000000,

"a2a_thread_id": "req_55992"

}

When Agent A calls Agent B, it passes this JWT in the Authorization: Bearer header. Agent B’s FastAPI sidecar validates the cryptographic signature of the token before the LLM is ever invoked. If a rogue marketing agent tries to ask the HR agent for payroll data, the API gateway rejects the token because the roles claim does not contain read:payroll. The LLM never even sees the malicious prompt.

Identity is enforced at the network layer, not the cognitive layer.

Chapter 4: Building the Deterministic A2A Handshake

Let’s look at how to implement this A2A gateway in Python. If you are exposing your internal LangGraph agent to be consumed by an external system (like Microsoft Copilot or a partner company’s AI), you must build a deterministic API gate in front of your graph.

Here is the blueprint for a hardened Agentic Router:

Python

from fastapi import FastAPI, HTTPException, Security

from fastapi.security import HTTPBearer, HTTPAuthorizationCredentials

from pydantic import BaseModel, Field

from typing import Optional

app = FastAPI()

security = HTTPBearer()

# 1. Define the strict A2A Contract Schema (The Briefcase)

class A2ARequest(BaseModel):

intent: str = Field(..., description="The specific action requested.")

parameters: dict = Field(..., description="Strict JSON payload matching the intent schema.")

max_hops: int = Field(default=3, description="Network TTL for infinite loop prevention.")

class A2AResponse(BaseModel):

status: str

deterministic_data: Optional[dict] = None

agent_reasoning: Optional[str] = None

# Mock JWT Validator

def verify_agent_token(credentials: HTTPAuthorizationCredentials = Security(security)):

# Cryptographic verification logic here

# Returns the Agent Principal (e.g., {"agent_id": "it_bot", "roles": [...]})

return {"agent_id": "verified_it_agent", "roles": ["read:directory"]}

# 2. The Deterministic API Gate

@app.post("/api/v1/a2a/execute", response_model=A2AResponse)

async def handle_agent_request(

payload: A2ARequest,

principal: dict = Security(verify_agent_token)

):

# Step A: Enforce RBAC against the Intent

if payload.intent == "VERIFY_DEPARTMENT" and "read:directory" not in principal["roles"]:

raise HTTPException(status_code=403, detail="Agent lacks required scope.")

# Step B: Route to the specific Graph Node based on INTENT, not chat

if payload.intent == "VERIFY_DEPARTMENT":

# We bypass the LLM "understanding" phase entirely.

# We inject the structured parameters directly into our graph's state machine.

graph_state = {"user_email": payload.parameters.get("email")}

# Invoke our internal HR agent graph

result = hr_agent_graph.invoke(graph_state)

# Step C: Return a strict, contract-bound response. No pleasantries.

return A2AResponse(

status="success",

deterministic_data={"department": result["department"], "is_active": True},

agent_reasoning="User found in active directory lookup."

)

else:

raise HTTPException(status_code=400, detail="Unknown Intent Contract")

Notice exactly what is happening here. The external agent is not allowed to talk directly to our internal LLM. It hits a deterministic FastAPI router. The router validates the JWT, parses the structured JSON, ensures the scopes match, and then injects the state into our internal LangGraph. Once the graph completes its internal reasoning and database queries, the router formats the output back into a strict JSON envelope.

We have completely eliminated the risk of conversational hallucination, scope creep, and infinite pleasantries.

Chapter 5: The Saga Pattern (Handling Multi-Agent Rollbacks)

We have solved communication and authentication. But distributed systems introduce a much darker problem: Partial Failures.

Imagine a highly complex “Employee Offboarding Agent” (Agent A). Its DAG dictates that it must:

Ask the IT Agent (Agent B) to revoke AWS access.

Ask the Finance Agent (Agent C) to lock the corporate credit card.

Ask the HR Agent (Agent D) to terminate the Workday profile.

What happens if Agent B and Agent C succeed, but Agent D fails because the Workday API is down?

You now have a fractured enterprise state. The employee cannot access AWS, their credit card is frozen, but they are still technically employed and receiving payroll in Workday. In traditional SQL databases, we use Two-Phase Commits (2PC) to rollback the whole transaction. But you cannot do a 2PC across disparate, asynchronous AI agents operating on different SaaS platforms.

You must implement the Saga Pattern.

The Saga Pattern dictates that every time an agent executes a state-mutating action, it must also expose a Compensating Transaction—a specific tool designed purely to undo what it just did.

If Agent A orchestrates a multi-agent workflow and encounters a critical failure downstream, it must autonomously trigger the compensating transactions of the agents that already succeeded.

Python

# Inside the Offboarding Orchestrator Agent (Agent A)

def execute_offboarding_saga(employee_id: str):

executed_steps = []

try:

# Step 1: Revoke AWS (Agent B)

a2a_client.post("agent_b", intent="REVOKE_AWS", params={"id": employee_id})

executed_steps.append({"agent": "agent_b", "undo_intent": "RESTORE_AWS"})

# Step 2: Lock Card (Agent C)

a2a_client.post("agent_c", intent="LOCK_CARD", params={"id": employee_id})

executed_steps.append({"agent": "agent_c", "undo_intent": "UNLOCK_CARD"})

# Step 3: Terminate Workday (Agent D) -> THIS FAILS

a2a_client.post("agent_d", intent="TERMINATE_HR", params={"id": employee_id})

except A2A_FailureException as e:

# THE SAGA ROLLBACK INITIATES

print(f"Workflow failed at Step 3. Initiating compensating transactions.")

# The agent autonomously works backward through its memory

# to undo the fractured state.

for step in reversed(executed_steps):

a2a_client.post(

step["agent"],

intent=step["undo_intent"],

params={"id": employee_id}

)

raise Exception("Offboarding aborted and state successfully rolled back.")

By enforcing the Saga pattern, you ensure that your swarm maintains Eventual Consistency. If an agentic workflow cannot complete entirely, the swarm is intelligent enough to clean up its own mess rather than leaving orphaned database records scattered across your enterprise.

Chapter 6: The Max-Hop Firewall and Dead Letter Queues

Finally, we must address the most terrifying edge case of autonomous swarms: recursive delegation.

Look back at the A2ARequest schema in Chapter 4. You will notice a parameter called max_hops: int = 3.

In standard microservice architectures, we use distributed tracing headers (like Jaeger or OpenTelemetry) to ensure a request doesn’t bounce endlessly between services in a routing loop. In Agentic AI, this is exponentially more critical because agents possess agency—they can autonomously decide to spawn new sub-tasks based on their reasoning.

If Agent A asks Agent B for a piece of data, and Agent B decides it doesn’t know the answer but Agent C might, so it routes the request to Agent C. But Agent C’s context window tells it that Agent A is the source of truth for this data, so it routes the request back to Agent A.

You now have a catastrophic recursive loop. The swarm is feeding on itself.

The Rule of A2A Network TTL (Time-To-Live):

Every time an agent payload is passed across an A2A network boundary, the max_hops integer must be decremented by 1.

If any agent receives a payload where max_hops == 0, the infrastructure must immediately hard-fail the request. The LLM is not consulted. The reasoning engine is bypassed. The router rejects the payload and shunts the entire state to a Dead Letter Queue (DLQ).

Python

# Inside the Agent B processing middleware

if incoming_payload.max_hops <= 0:

# Send the failed contract to a database table for human review

dlq.insert(thread_id=current_thread, payload=incoming_payload, reason="Max Hops Exceeded")

return A2AResponse(

status="fatal_error",

agent_reasoning="A2A max_hops limit reached. Potential recursive routing detected. Execution terminated."

)

# If Agent B decides it MUST delegate to Agent C, it decrements the TTL

next_hop_payload = A2ARequest(

intent="FETCH_METADATA",

parameters={"id": user_id},

max_hops=incoming_payload.max_hops - 1 # <-- Decrementing the TTL

)

You are enforcing strict network-level physics on cognitive behavior. No matter how much an LLM “wants” to continue researching a topic or delegating a task, the network firewall cuts the cord. The DLQ then alerts a human engineer (via the Human API we built on Tuesday) to investigate why the swarm got confused and to manually resolve the loop.

The Executive Mandate: API Design is Now Prompt Design

The massive multi-agent enterprise swarms that Microsoft, Salesforce, and IBM are currently selling will only work if your internal architecture is ready to communicate with them.

If you are currently building agents that only know how to output raw Markdown text to a human chat window, you are building legacy software. You are building toys.

To survive the Agentic era, your agents must be able to serialize their intent into strict JSON contracts. They must be able to validate cryptographic caller identities using M2M JWTs. They must be capable of orchestrating compensating transactions via the Saga pattern when things break. And they must obey strict network tracing and max_hop firewall rules.

The future of the enterprise is not a single, god-like AI sitting in a central server. It is a highly choreographed, deterministic swarm of micro-agents trading JSON contracts at the speed of light across dozens of different SaaS platforms.

Stop building chat interfaces.

Stop writing unstructured English prompts.

Start building A2A protocols.

Over to you: How is your team handling agent-to-agent communication? Are you exploring the Model Context Protocol (MCP), or are you building custom JSON routers? Let’s dissect your architecture in the comments below.